How to Get Data from a Website Without Getting Blocked

There is an enormous amount of data on the internet. One report estimates that companies generate about 2,000,000,000,000,000,000 bytes (roughly 2 exabytes) of data every day.

However, without effective tools and processes, this data simply sits on the internet instead of being put to productive use by enterprises worldwide.

Two of the most widely used ways to extract this data are web scraping and web crawling. Both rely on tools and software that help brands quickly extract the right data at the right time.

Web crawling gathers the URLs and hyperlinks that will later be used for collecting the data. But it is a process with its own challenges.

In this article, we explain what web crawling is, describe how web crawlers benefit businesses, and finish with how to crawl a website without getting blocked.

What is Web Crawling?

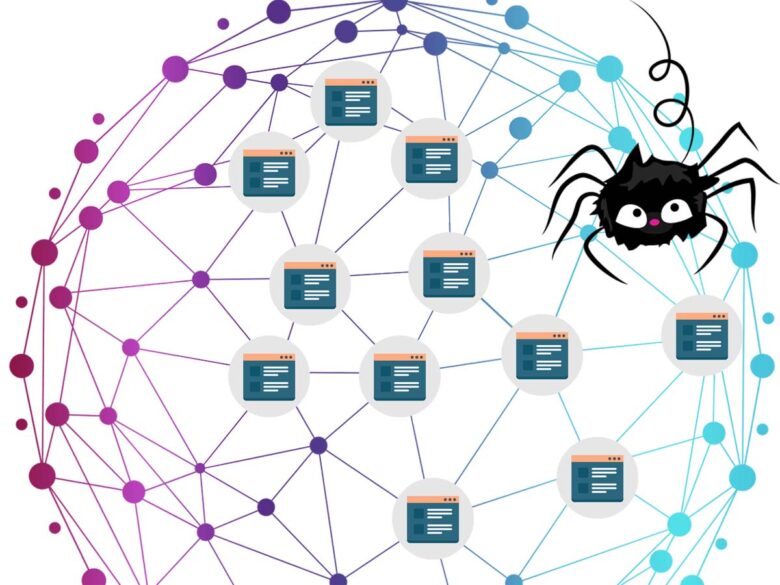

Web crawling is the process of navigating websites and webpages and indexing the URLs found along the way.

The indexing arranges the URLs in an ordered list, so that web scraping or data extraction can then run smoothly and collect only the necessary data without wasting time sorting through unrelated links.

The end product of web crawling is usually a list of URLs and other important data fields. The output of web crawling is then fed into web scrapers to get the data that businesses need.
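To make the idea concrete, here is a minimal sketch of a crawler that walks pages breadth-first and returns the ordered list of URLs it visited. It uses only the Python standard library. The `FAKE_SITE` dictionary and the `example.com` URLs are assumptions made for illustration; in a real crawler, the `fetch` argument would be a function that downloads a page over HTTP.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def extract_links(base_url, html):
    """Return absolute URLs (without #fragments) found in one page."""
    parser = LinkParser()
    parser.feed(html)
    return [urldefrag(urljoin(base_url, href)).url for href in parser.links]


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl; returns URLs in the order they were visited."""
    index = []
    seen = {start_url}
    queue = deque([start_url])
    while queue and len(index) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:  # page could not be fetched; skip it
            continue
        index.append(url)
        for link in extract_links(url, html):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return index


# Hypothetical three-page site held in memory (stands in for real HTTP fetches)
FAKE_SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b#top">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/">Home</a>',
    "https://example.com/b": "<p>No links here</p>",
}

index = crawl("https://example.com/", FAKE_SITE.get)
print(index)
```

The resulting `index` list is the kind of output that would then be handed to a scraper, which visits each URL and extracts the data fields of interest.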

What Are Web Crawlers?

Web crawlers are tools or software that navigate websites and webpages to gather related URLs and hyperlinks.

They are also commonly called web spiders because of how they work: they move from one website or webpage to the next until they have indexed the content and links each one contains.

There are different kinds of web crawlers, each with its own advantages and disadvantages. Below, we consider three broad groups.

Crawlers As Website APIs

This is the simplest group of crawlers. They work through APIs provided by the websites themselves, which makes the job easier for the bot because the website explicitly grants access.

Pros:

- They are very fast and can be used to achieve a lot in a short time

- Connecting is simple and only requires internet access

- The data is more reliable because it comes directly from the website itself

Cons:

- Highly restrictive, as they can only access what the website allows

- They can be difficult for non-programmers who have never worked with APIs to operate

- They may pose a security risk for the receiving program

- The cost of maintenance is often higher than with other kinds of crawlers
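As an illustration of the API-based approach, the sketch below collects records from a paginated JSON API. Everything specific here is an assumption: the `api.example.com` endpoint, the `page` and `per_page` parameters, and the `items` and `next_page` response fields are hypothetical, and a real website's API documentation defines its own. The `fetch` argument is any function that takes a URL and returns the response body as text.

```python
import json
from urllib.parse import urlencode

API_BASE = "https://api.example.com/v1/products"  # hypothetical endpoint


def build_url(page, per_page=50):
    """Build the request URL for one page of results."""
    return f"{API_BASE}?{urlencode({'page': page, 'per_page': per_page})}"


def parse_page(body):
    """Return (items, next_page) from one JSON response body."""
    payload = json.loads(body)
    return payload["items"], payload.get("next_page")


def collect(fetch, start_page=1):
    """Follow next_page pointers until the API reports no more pages."""
    items, page = [], start_page
    while page is not None:
        batch, page = parse_page(fetch(build_url(page)))
        items.extend(batch)
    return items
```

Because the API decides which fields and pages exist, the crawler can only collect what the website chooses to expose, which is the restriction noted in the list above.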

Self-Built Crawlers

Another group consists of crawlers built and owned by the brands that use them. These are more flexible and easier to customize, but the bulk of the responsibility and maintenance falls on the owners.

Pros:

- Highly customizable and can be used to perform more tasks and operations

- They can be easily integrated with tools such as proxies to reduce the risk of getting blocked

- They can be built with frameworks and libraries that even beginners can work with

Cons:

- Requires time and energy to build and launch

- Maintenance is usually the responsibility of the owners

- May be harder to operate for those with no programming skills

Ready-to-Use Crawlers

These are tools or software built by a third party for navigating the internet. They are run and managed by their developers but may be expensive to acquire.

Pros:

- Easy to work with, as they don't require the business owner to write code

- Easy to configure and can work with just about any website or platform

- Eliminates the cost of maintenance

Cons:

- May not be as flexible as those developed by the brand itself

- Takes time to learn and operate properly

- May be too expensive for smaller enterprises

Benefits of Web Crawlers

Crawlers are beneficial for businesses all over the world, and below are some of the most obvious benefits:

Brand Monitoring

Brands need to watch where their names are being mentioned on the internet. This helps them stay on top of comments and reviews and catch the negative ones before they damage the brand's reputation.

Web crawlers can be used to crawl several platforms and forums to collect these reviews early enough and in large quantities.

Lead Generation

Generating leads helps a company keep a list of potential buyers it can sell to in the future.

An enterprise with no leads will soon run out of customers. Crawlers are useful for navigating major e-commerce websites to gather details of the people most likely to buy from the business.

Market Trend Monitoring

Understanding what is happening at any point in the market is critical to helping businesses maximize profits and reduce losses.

A brand can use web crawlers to monitor market trends and collect the data it needs.

How to Crawl a Website without Getting Blocked

If you are looking for how to crawl a website without getting blocked, the following tips and practices should help:

Use Proxies

Proxies are a critical tool for avoiding blocks on the internet. They let you switch between IP addresses and locations, so that websites are less likely to recognize that successive requests come from the same user.
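One simple way to switch between IP addresses is to rotate through a pool of proxies, using a different one for each request. The sketch below does this with Python's standard `urllib.request` module. The proxy addresses are placeholders from a documentation-only IP range and are assumptions, as are the helper names `next_opener` and `fetch`; real addresses would come from a proxy provider.

```python
import urllib.request
from itertools import cycle

# Hypothetical proxy addresses; replace with ones from your provider
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]

# cycle() hands out the proxies in order and starts over at the end
proxy_pool = cycle(PROXIES)


def next_opener():
    """Return (proxy, opener); the opener routes traffic through that proxy."""
    proxy = next(proxy_pool)
    handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    return proxy, urllib.request.build_opener(handler)


def fetch(url, timeout=10):
    """Download one URL through the next proxy in the pool."""
    _proxy, opener = next_opener()
    with opener.open(url, timeout=timeout) as response:
        return response.read()
```

Round-robin rotation is the simplest policy. Picking a proxy at random, or retiring proxies that start returning errors, are common variations.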

Schedule Crawling

Another way to crawl websites without getting blocked is to schedule your crawling. Crawling during peak hours puts extra load on the site and can trigger defense mechanisms that block you, so it is better to crawl when traffic is low.
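A scheduled crawl can be as simple as checking the clock before starting. The sketch below assumes an off-peak window of 01:00 to 05:00 in the target site's local time. That window is an assumption for illustration, since actual low-traffic hours differ from site to site.

```python
from datetime import datetime, timedelta

# Assumed off-peak window (target site's local time): 01:00 up to 05:00
OFF_PEAK_START = 1
OFF_PEAK_END = 5


def is_off_peak(now):
    """True if the given time falls inside the off-peak window."""
    return OFF_PEAK_START <= now.hour < OFF_PEAK_END


def seconds_until_off_peak(now):
    """Seconds to wait before the next off-peak window opens (0 if open now)."""
    if is_off_peak(now):
        return 0.0
    start = now.replace(hour=OFF_PEAK_START, minute=0, second=0, microsecond=0)
    if start <= now:  # today's window has already passed; use tomorrow's
        start += timedelta(days=1)
    return (start - now).total_seconds()
```

A crawler could call `time.sleep(seconds_until_off_peak(datetime.now()))` before it starts, or the same check could be left to a system scheduler such as cron.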

Change Crawling Patterns

You can also reduce the risk of getting blocked by changing the pattern you use when crawling, such as the order of the pages you visit and the timing between requests. Repeating the same pattern makes it easy for the website to identify you and ban your activities.

But a simple change in pattern can make it difficult to recognize you.
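As a rough sketch of what changing the pattern can look like, the function below shuffles the order in which URLs are visited, picks a random delay before each request, and varies the User-Agent header. The User-Agent strings, the delay range, and the name `plan_requests` are all assumptions chosen for illustration.

```python
import random

# Hypothetical User-Agent strings, for illustration only
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) ExampleBrowser/1.0",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) ExampleBrowser/2.0",
    "Mozilla/5.0 (X11; Linux x86_64) ExampleBrowser/3.0",
]


def plan_requests(urls, min_delay=2.0, max_delay=8.0, rng=random):
    """Return (url, delay_seconds, user_agent) tuples in shuffled order."""
    shuffled = list(urls)  # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    return [
        (url, rng.uniform(min_delay, max_delay), rng.choice(USER_AGENTS))
        for url in shuffled
    ]
```

A crawler would then loop over the plan, sleep for `delay_seconds` before each request, and send the chosen `user_agent` as a request header. Passing `rng=random.Random(42)` makes a plan reproducible for testing.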

Conclusion

Getting banned during web crawling puts a stop to your operations and impedes data collection, leaving you with less data than you need.

If you are looking for how to crawl a website without getting blocked, the practices highlighted above should help you get past these hurdles.